low GPU-util and high CPU-util #5681

Comments

|

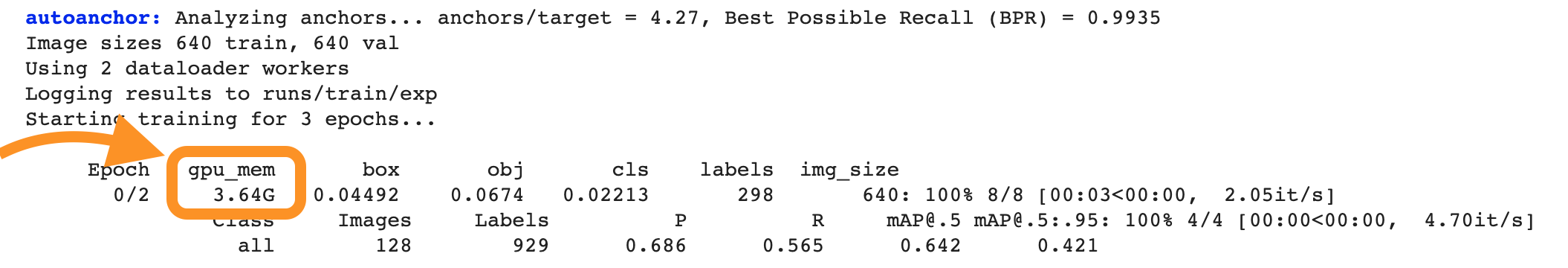

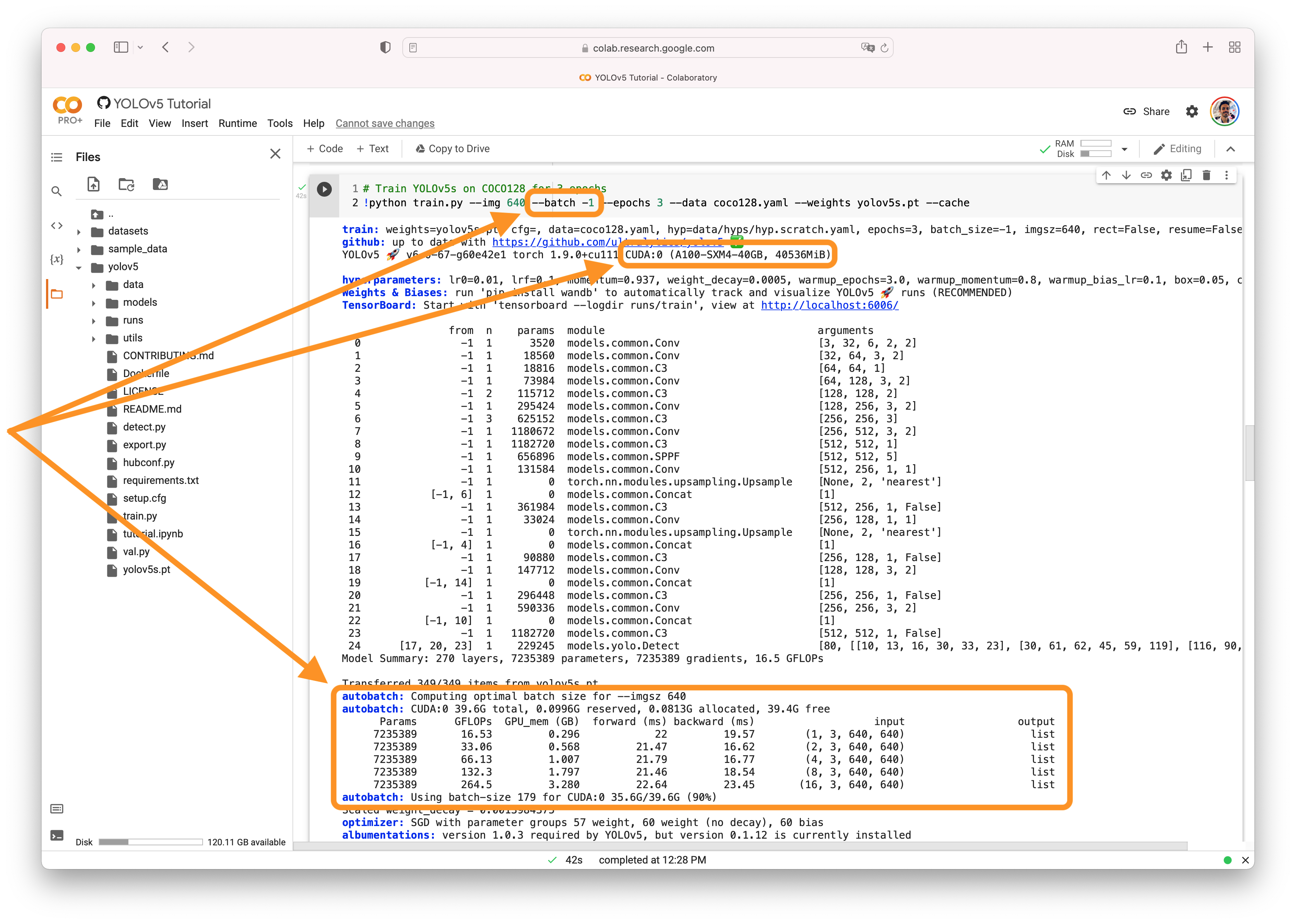

@learncrazy 👋 Hello! Thanks for asking about CUDA memory issues. YOLOv5 🚀 can be trained on CPU, single-GPU, or multi-GPU. When training on GPU it is important to keep your batch-size small enough that you do not use all of your GPU memory, otherwise you will see a CUDA Out Of Memory (OOM) Error and your training will crash. You can observe your CUDA memory utilization using either the

CUDA Out of Memory Solutions

If you encounter a CUDA OOM error, the steps you can take to reduce your memory usage are:

AutoBatch

You can use YOLOv5 AutoBatch (NEW) to find the best batch size for your training by passing

Good luck and let us know if you have any other questions! |

|

Thank you for your answer. The program is working properly and there is no CUDA out-of-memory problem. When I checked GPU utilization with nvidia-smi, I found that GPU-Util was unstable: around 90% at one moment and 0% the next. This means that the GPU is not being well utilized. The explanation I found is that while GPU-Util is 0%, the program is waiting for training data. |
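One way to put a number on the fluctuation described above is to sample `nvidia-smi` repeatedly and count how often the GPU sits idle. The following is only a rough sketch: the `--query-gpu` and `--format` options are standard `nvidia-smi` flags, but the helper names (`parse_sample`, `read_samples`, `idle_fraction`), the 10% idle threshold, and the example readings are assumptions made for illustration, not values taken from this issue.

```python
import subprocess

# Standard nvidia-smi query flags; all helper names below are hypothetical.
QUERY = [
    "nvidia-smi",
    "--query-gpu=utilization.gpu,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_sample(line):
    """Parse one CSV line such as '90, 5120, 8192' into a dict of ints."""
    util, used, total = (int(field.strip()) for field in line.split(","))
    return {"util": util, "mem_used_mib": used, "mem_total_mib": total}


def read_samples():
    """Query nvidia-smi once and return one dict per GPU.

    Requires an NVIDIA driver; it is not called at import time.
    """
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return [parse_sample(line) for line in out.splitlines() if line.strip()]


def idle_fraction(utils, threshold=10):
    """Fraction of utilization readings at or below `threshold` percent."""
    if not utils:
        return 0.0
    return sum(u <= threshold for u in utils) / len(utils)


# Illustrative readings only, not measurements from this issue.
example = [90, 0, 88, 0, 0, 91]
print(idle_fraction(example))  # 0.5
```

Calling `read_samples()` in a loop with a short sleep during training would give real readings to pass to `idle_fraction`. A high idle fraction alongside busy CPU cores is consistent with the GPU waiting on the data pipeline.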

|

@learncrazy yes that's correct. Low GPU utilization is a symptom of bottlenecks in the dataloader. Your images are not being read from your hard drive fast enough. You can try to cache your dataset:

python train.py --cache ram

python train.py --cache disk |
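To check whether the dataloader really is the bottleneck before and after caching, you can time how long each iteration waits for the next batch compared with how long the training step takes. This is a minimal, framework-free sketch rather than anything from YOLOv5 itself: `time_loader_vs_step` and `slow_batches` are hypothetical names, and the sleep only stands in for slow disk reads. With a real PyTorch model on GPU, the step function would also need to call `torch.cuda.synchronize()` before returning, because CUDA kernels are launched asynchronously and the step would otherwise appear faster than it is.

```python
import time


def time_loader_vs_step(batches, step_fn):
    """Return (seconds spent waiting for batches, seconds spent in step_fn)."""
    wait = 0.0
    compute = 0.0
    iterator = iter(batches)
    while True:
        t0 = time.perf_counter()
        try:
            batch = next(iterator)  # time spent here is data-loading wait
        except StopIteration:
            break
        t1 = time.perf_counter()
        step_fn(batch)  # time spent here is the training step
        t2 = time.perf_counter()
        wait += t1 - t0
        compute += t2 - t1
    return wait, compute


def slow_batches(n, delay):
    """Hypothetical loader: yields n batches, sleeping `delay` seconds for each."""
    for i in range(n):
        time.sleep(delay)  # stands in for slow disk reads and image decoding
        yield i


wait, compute = time_loader_vs_step(slow_batches(5, 0.02), lambda batch: None)
print(f"waiting on data: {wait:.3f}s, compute: {compute:.3f}s")
```

If the wait time dominates, as it does in this toy run, faster storage or caching should help; if the step time dominates, the dataloader is probably not the limiting factor.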

|

@glenn-jocher Thank you for your answer. I got it. |

|


Question

I have a question about the training process. During training, I found that GPU utilization on my machine was very low; it rose only momentarily while a batch was being trained. It looks like the GPU is waiting for data to arrive during the periods of low utilization.

Is this normal?

